This is an excerpt from Prof. Roger Pielke's (Colorado State University) weblog "Climate Science", 31 Jan 07.

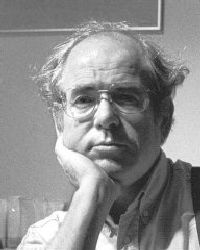

Hendrik Tennekes, retired Director of Research, Royal Netherlands Meteorological Institute, former Professor of Aeronautical Engineering at the Pennsylvania State University and internationally recognized expert in atmospheric boundary layer processes contributes another guest weblog today to Climate Science (see his first weblog on January 6, 2006). He has the professional qualifications and experience in climate science to comment on this issue.

Seventeen years ago, I wrote a column for Weather magazine, expressing my concerns about the lack of honesty, integrity and humility of many climate scientists. “I worry about the arrogance of scientists who claim they can help solve the climate problem, provided their research receives massive increases in funding”, reads one line from my text. Unknown to me, my friend Richard Lindzen was working on his famous paper “Some Coolness Concerning Global Warming”, which appeared in the Bulletin of the AMS at the same time. This was early 1990. It is 2007 now, and I want to ring the alarm bell again. There is a difference, though: then I was worried, now I am angry. I am angry about the Climate Doomsday hype that politicians and scientists engage in. I am angry at Al Gore, I am angry at the Bulletin of the Atomic Scientists for resetting its Doomsday clock, I am angry at Lord Martin Rees for using the full weight of the Royal Society in support of the Doomsday hype, I am angry at Paul Crutzen for his speculations about yet another technological fix, I am angry at the staff of IPCC for their preoccupation with carbon dioxide emissions, and I am angry at Jim Hansen for his efforts to sell a Greenland Ice Sheet Meltdown Catastrophe. Speaking of Hansen, Dick Lindzen and I wrote a lighthearted April Fools’ Day parody of his concerns, which was published on Fred Singer’s SEPP website (search for Greenland Green Again) last year. I can go on much longer, but I will keep my anger in check.

I am more than a little bit worried about IPCC’s preoccupation with CO2. The scientific rationale behind this choice is obvious. Sophisticated climate models have been running for twenty years now. It has become evident that these models cannot be made to agree on anything except a possible relation between greenhouse gases and a slight increase in globally averaged temperatures. The number of knobs that can be twiddled in the parameterization of the radiation budget is not all that large. Seemingly realistic results can be achieved without much intellectual effort. I agree with IPCC that there is a likely link between fossil fuel consumption and increased temperatures. But this is where the much proclaimed consensus ends. Just one example: the models do not include feedbacks between changing farming and forest harvesting practices and the atmospheric circulation. Partly for that reason, they cannot seem to agree on precipitation patterns. It so happens that precipitation is far more relevant to the world’s food production than a slight increase in temperature. I owe this insight to my good friend Denny (Dennis W.) Thomson at Penn State. Like me, he speaks from decades of experience. Denny is the oldest son of a world-renowned arctic lichenologist. He and his wife had the good fortune to grow up on farms in southwestern Wisconsin. Still closely bound to the earth and its delicate ecosystems, they live on a 600-acre farm in Halfmoon Valley on the southeastern flank of Bald Eagle Ridge. A physicist/meteorologist, and former head of Penn State’s meteorology department, Denny has witnessed climate change in progress for most of his life. At the same time he is deeply concerned about the veracity of “physics-challenged” climate models.

Why is it so difficult to make precipitation forecasts fifty years into the future? Most precipitation in the middle latitudes is associated with low-pressure systems, which move along storm tracks carved out by the jet stream. The ever-shifting meanders in the jet stream occur at the edge of the slab of cold air over the poles. The specialists call this slab the Polar Vortex, and have christened the meandering behavior of the jet stream in the Northern hemisphere the Arctic Oscillation. Thirty years ago I worked with Mike (John M.) Wallace and his PhD student N.C. Lau at the University of Washington in Seattle on problems concerning eddy-flux maintenance in the North Atlantic storm track. It is evident to all turbulence specialists that the dynamics of very slowly evolving states is different from the dynamics of instantaneous states. So the moment one asks what keeps the jet stream going, one encounters the kind of problem that is at the core of all turbulence research. But the mainstream of dynamic meteorology refuses to study the slow evolution of the general circulation. It has become so easy to run General Circulation Models on supercomputers that most atmospheric scientists shy away from matters like a thorough study of the interaction between the Polar Vortex and the Arctic Oscillation. Mike Wallace mailed me a year ago, saying that there is not a beginning of consensus on a theory of the Arctic Oscillation. This was one of the highlights in an advanced senior-citizens’ class on climate change I taught a year ago. It was announced as “A Storm in the Greenhouse”, referring primarily to the increasingly bitter debates of the past fifteen years.

How does this problem affect climate forecasts? If there is not even a rudimentary theory of the Polar Vortex, much less an established relation between rising greenhouse gas concentrations and systematic changes in the Arctic Oscillation, one cannot possibly make inferences about changes in precipitation patterns. We do not know, and for the time being cannot know anything about changing patterns of clouds, storms and rain. Holland’s national weather service KNMI circumvented this impasse last year by issuing climate change scenarios with and without changes in the position of the North Atlantic storm track. It did not occur to the KNMI spokesmen that they should have been forthright about their lack of knowledge. They should have said: we know nothing of possible changes in the storm track, so we cannot say anything about precipitation. But it is entirely consistent with the IPCC tradition to weasel around such issues. One of my contacts at KNMI recently explained to me that their choice was based on the increasing agreement between simulations run with different GCM’s. I had to answer that the IPCC spirit of consensus apparently was invading their supercomputers as well. It is bad enough that computer simulations cannot be checked against observations until after the fact. In the absence of a robust stochastic-dynamic theory of the general circulation, one cannot even check climate simulations against fundamental insights.

Actually, the monopoly of GCM’s in the climate research business is an interesting object of inquiry, and not just for sociological reasons. A GCM is a weather forecasting model in which the coefficients and parameterizations are tuned so as to obtain long-term results that have an air of realism. The model is then run for several tens of years. There are no penetrating studies of the way slight software mismatches might affect the average values of key output parameters fifty years from now. A forecasting model can make do with relatively crude parameterizations because the short-time evolution of the atmospheric circulation is primarily governed by its internal dynamics. Sloppy representations of boundary conditions, clouds, convection, evaporation and condensation do not mess weather forecasts up all that fast. But the long-term evolution of the general circulation is to a large extent determined by boundary conditions. This realization struck me with some force when I discovered last year that a simple algorithm for inversion rise above the daytime boundary layer I conceived in 1973 is still in wide use today. How can one be sure that an ancient forecasting algorithm is capable of performing the task assigned to it in climate models? At times it seems that no one in this business has learned about Karl Popper’s falsifiability demand. This is why I cringe at WCRP documents promoting Forecasting at All Time Scales. The obvious purpose of such propaganda is to defend the monopoly position that GCM’s have enjoyed for so long. It is strategy, not science. A whole generation of meteorologists is growing up with the idea that this is the only way to go. They were not exposed to Lorenz’ WMO monograph on the General Circulation; their faces turn blank when the terms Available Potential Energy and Eddy Kinetic Energy are used. Since they are offered no alternatives, they join those who claim that they need higher resolution and bigger computers. The job of having to think on one’s own feet is too hard to contemplate.

All of 2006 I have been corresponding with Tim Palmer, a leading scientist at the European Centre for Medium-Range Weather Forecasts (ECMWF). The apparent focus of our discussion was the dynamics of vortex filaments around blocking highs. Palmer intuited that thin sheets of positive relative vorticity around a negative-vorticity core may serve to prolong the life of a high-pressure system. I felt this was an interesting hypothesis. For many years I have ridiculed the phraseology in which blocking highs are said to divert storms coming their way. More than once I have explained to a reporter that it would be equally appropriate to state that diverging storms sustain a blocking high.

Then came the rub. Thin vortex filaments can be simulated on a supercomputer only if the horizontal resolution is much improved. With the current mesh size of the ECMWF model at 40 kilometers, if I am not mistaken, simulation of the vorticity microstructure in the troposphere would require a 10,000-fold increase in computer power. So this is how the propaganda for petaflop computing emanating from WCRP comes about, I thought. One hundred computers of the generation following the next would indeed generate the desired increase. This in turn would require a facility the size of CERN, ITER, or the preposterous Superconducting Super Collider.
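The arithmetic behind these figures can be sketched roughly as follows. The text gives only the 40 km mesh and the 10,000-fold factor; everything else here is an assumption made for illustration. In particular, the sketch assumes that computing cost grows as a power of the mesh refinement ratio (exponent 3 if only the two horizontal dimensions and the time step are refined, exponent 4 if the vertical is refined as well), and that each computer generation brings a tenfold gain, a value back-solved from the "one hundred computers of the generation following the next" remark rather than stated in the text. The function names are hypothetical.

```python
import math


def refinement_for_cost_factor(cost_factor: float, exponent: float) -> float:
    """Refinement ratio r satisfying r**exponent == cost_factor.

    Assumes cost scales as a pure power law of the refinement ratio,
    which is a simplification and not a claim from the text.
    """
    return cost_factor ** (1.0 / exponent)


def mesh_after_refinement(mesh_km: float, cost_factor: float, exponent: float) -> float:
    """Mesh size (km) affordable with `cost_factor` times more computing power."""
    return mesh_km / refinement_for_cost_factor(cost_factor, exponent)


def combined_speedup(n_machines: int, generations_ahead: int, gain_per_generation: int) -> int:
    """Total speedup from running n_machines, each several generations ahead."""
    return n_machines * gain_per_generation ** generations_ahead


if __name__ == "__main__":
    current_mesh_km = 40.0  # from the text ("if I am not mistaken")
    cost_factor = 10_000    # from the text

    # Assumed scaling exponents: 3 (horizontal + time step), 4 (plus vertical).
    for exponent in (3, 4):
        mesh = mesh_after_refinement(current_mesh_km, cost_factor, exponent)
        print(f"exponent {exponent}: mesh of about {mesh:.2f} km")

    # Assumed tenfold gain per generation; two generations ahead, 100 machines.
    print("combined speedup:", combined_speedup(100, 2, 10))
```

Under these assumptions a 10,000-fold increase buys a mesh of somewhere between roughly 2 and 4 kilometers, and one hundred machines two generations ahead do supply a factor of 10,000.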

Is this what John Houghton, Bert Bolin, Martin Rees, the IPCC staff and the like are aiming for? I parted company with these power brokers many years ago, so I cannot begin to imagine what they are up to this time. Palmer has convinced me he is not their puppet, fortunately. We continued our correspondence. “So you’re really lobbying for a massive computer facility”, I wrote, “you participate in the same song and dance that has annoyed me for so long”. In my years as Director of Research at KNMI, the scientists around me honestly felt that my only job was to promote the early purchase of the next supercomputer. They were eager to collude behind my back with the hardware crowd at KNMI and salesmen from computer manufacturers. This often resulted in seemingly attractive discounts being offered around October, just when the salesmen had heard through the grapevine that a budget surplus would soon be reported to the Management Team.

I might have been sympathetic to Palmer’s ideas if he had argued in favor of a much better representation of ocean eddies, or the atmospheric boundary layer, or the climatic effects of changing farming practices. The dynamics of storm tracks and blocking highs is only one of many interactions demanding more research. It is certainly not appropriate to focus much climate computer power on just this one issue. That can be done better on specialized computers. In the case of blocking highs, a forecasting computer would fit best, because it is dedicated to the internal dynamics of the atmosphere. In my mind, a sense of balance was missing in Palmer’s appeal.

I want to lobby for decency, modesty, honesty, integrity and balance in climate research. I hope and pray we lose our obsession with climate forecasting. Climate simulations are best seen as sensitivity experiments, not as tools for policy makers. I said it in 1990 and I am saying it now: the constraints imposed by the planetary ecosystem require continuous adjustment and permanent adaptation. Predictive skills are of secondary importance. We should stop our support for the preoccupation with greenhouse gases our politicians indulge in. Global energy policy is their business, not ours. We should not allow politicians to use fake doomsday projections as a cover-up for their real intentions. If IPCC does not come to its senses, I’ll be happy to let it stew in its own juices. There is plenty of other work to do.

In 1976, Steve (Stephen H.) Schneider published a book entitled The Genesis Strategy. It made quite an impact on me at the time, primarily because Schneider did not promote technological fixes, but a global strategy of what is now called Adaptation, an idea reluctantly and belatedly embraced by IPCC. Those were the days of Nuclear Winter, weather modification, Project Stormfury, stratospheric ozone destruction, and the sick idea of seeding all Arctic ice with soot to prevent the next ice age. In the preface to his book, Schneider quotes Harvey Brooks, then Harvard dean of engineering:

“Scientists can no longer afford to be naïve about the political effects of publicly stated scientific opinions. If the effect of their scientific views is politically potent, they have an obligation to declare their political and value assumptions, and to try to be honest with themselves, their colleagues and their audience about the degree to which their assumptions have affected their selection and interpretation of scientific evidence”.

I rest my case.